🍌 Google Nano Banana 2 Is Here — Pro Quality at Flash Speed

On February 26, 2026, Google DeepMind officially launched Nano Banana 2 (technically Gemini 3.1 Flash Image), arguably the most strategically significant AI image generation model yet. The new model combines the studio-quality capabilities of Nano Banana Pro with Flash-tier speed and pricing, halving the per-image cost at 1K resolution while retaining near-Pro fidelity.

Nano Banana 2 is now the default image generator across the Gemini app, Google Search in 141 new countries, Google Ads, and Google’s creative studio Flow — instantly placed in front of potentially billions of users.

The pricing math reshapes the enterprise decision

Per image, Nano Banana 2 costs $0.067 at 1K resolution against $0.134 for Nano Banana Pro, a 50% cut. Text token costs fall further: $0.50 per million input tokens versus ~$2.00 for Pro, and $3.00 per million output tokens versus ~$12.00, a 70-80% reduction (about 75% at these list prices). At the standard 1K price, Nano Banana 2 sits above DALL-E 3 ($0.04) and Flux Pro ($0.04-0.05), but its batch-mode price ($0.034) undercuts both (Apiyi.com Blog). Among major models, only Nano Banana 2 offers native 4K generation at $0.151 per image.

As VentureBeat's enterprise analysis put it: "The question is no longer whether AI image models are good enough for production — it's which vendor's cost curve best fits the workflow." Partner HubX reported a 74-76% reduction in latency after integrating Nano Banana 2, making face-editing workflows 4x faster without sacrificing Pro-level quality.
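The two HubX figures are linked by simple arithmetic: if latency falls by a fraction r, the same job takes (1 - r) of the original time, so the speedup is 1 / (1 - r). A minimal Python sketch of that conversion follows; the function name is illustrative and does not come from any Google or HubX tooling.

```python
def speedup_from_latency_reduction(reduction: float) -> float:
    """Convert a fractional latency reduction (0 <= r < 1) into a speedup factor."""
    if not 0 <= reduction < 1:
        raise ValueError("reduction must be in the range [0, 1)")
    return 1 / (1 - reduction)


# The 74-76% range reported by HubX, plus the midpoint.
for r in (0.74, 0.75, 0.76):
    factor = speedup_from_latency_reduction(r)
    print(f"{r:.0%} lower latency -> {factor:.2f}x faster")
```

A 74-76% reduction works out to roughly 3.8x to 4.2x, which is where the rounded "4x faster" figure comes from.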

📖 From Viral Sensation to Production Workhorse in 6 Months

The original Nano Banana appeared anonymously on LMArena in August 2025, opened the largest Elo lead in Arena history (171 points), and attracted 5 million votes in two weeks. Three months later, Nano Banana Pro arrived with 4K support and 10/10 prompt adherence, but at $0.134 per image. Nano Banana 2 resolves that tension between quality and cost, delivering Pro’s feature set at Flash speeds for just $0.067 per image.

What’s New: Key Features

Image Search Grounding — the standout new capability. Nano Banana 2 can pull real-time information from Google Search to generate more accurate images of landmarks, products, and public figures. No competitor offers this. Google’s demo app “Window Seat” generates photorealistic views from any location worldwide using live weather data.

Configurable Thinking Levels allow developers to balance quality vs. latency. Setting thinking to “High” or “Dynamic” enables the model to plan, generate, review, and correct before outputting — a full self-review architecture.

Key specs: 512px to 4K resolution across 14 aspect ratios, character consistency for up to 5 characters and 14 objects, multilingual text rendering, and a 131,072-token context window.
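With 14 aspect ratios on offer, an application usually has to map an arbitrary canvas onto the nearest supported shape. The sketch below shows one way to do that in Python. The ratio list is an assumption: only 4:1, 1:4, 8:1, and 1:8 are named in this article, and the other entries are common ratios included for illustration rather than a confirmed list.

```python
import math

# Only 4:1, 1:4, 8:1 and 1:8 are named in this article. The remaining
# entries are assumed, commonly used ratios, not a confirmed model spec.
ASSUMED_RATIOS = ["1:1", "3:2", "2:3", "4:3", "3:4", "16:9", "9:16",
                  "4:1", "1:4", "8:1", "1:8"]


def closest_ratio(width: int, height: int, ratios=ASSUMED_RATIOS) -> str:
    """Return the ratio label whose shape is nearest to width x height."""
    target = width / height

    def distance(label: str) -> float:
        w, h = map(int, label.split(":"))
        # Compare on a log scale so 2:1 and 1:2 are equally far from 1:1.
        return abs(math.log((w / h) / target))

    return min(ratios, key=distance)


print(closest_ratio(1920, 1080))  # standard widescreen canvas
print(closest_ratio(3000, 1000))  # billboard-style canvas
print(closest_ratio(1080, 1920))  # vertical story canvas
```

Comparing on a log scale is a design choice: it treats "twice as wide" and "twice as tall" as equal distances, which a plain difference of ratios would not.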

Pricing: Up to 50% Cheaper Than Pro

Nano Banana 2 substantially undercuts Nano Banana Pro. At 1K resolution, it costs $0.067 vs Pro’s $0.134 (50% cheaper). At 4K, it’s $0.151 vs Pro’s $0.240 (37% cheaper). Batch mode drops to $0.034/image, below DALL-E 3’s $0.04. Text token costs fall by 70–80% vs Pro. Partner HubX reported workflows running 4x faster with 74–76% lower latency.
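The percentages above can be checked, and extended to a budget estimate, with a few lines of Python. This is a sketch that uses only the per-image prices quoted in this article; the dictionary keys and function names are made up for illustration, and real bills will also include token charges.

```python
# Per-image prices in USD as quoted in this article.
IMAGE_PRICES = {
    "1K": {"nano_banana_2": 0.067, "pro": 0.134},
    "4K": {"nano_banana_2": 0.151, "pro": 0.240},
}
BATCH_PRICE = 0.034  # Nano Banana 2 batch mode, per image


def percent_cheaper(new_price: float, old_price: float) -> float:
    """Percentage saved when moving from old_price to new_price."""
    return (1 - new_price / old_price) * 100


def job_cost(image_count: int, price_per_image: float) -> float:
    """Total cost of image_count images at a flat per-image price."""
    return image_count * price_per_image


for tier, price in IMAGE_PRICES.items():
    saved = percent_cheaper(price["nano_banana_2"], price["pro"])
    print(f"{tier}: {saved:.0f}% cheaper than Pro")

standard = job_cost(10_000, IMAGE_PRICES["1K"]["nano_banana_2"])
batch = job_cost(10_000, BATCH_PRICE)
print(f"10,000 images at 1K: ${standard:,.2f} standard, ${batch:,.2f} batch")
```

For a 10,000-image job at 1K, the quoted prices imply about $670 at the standard rate and about $340 in batch mode, against about $1,340 on Pro.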

⚡ Speed: The 4x Faster Claim Explained

With thinking enabled, Nano Banana 2 generates images in under 20 seconds on LMArena. Competitors are slower: GPT-Image-1.5 takes ~50 seconds and Grok Imagine Image Pro exceeds 85 seconds. Measured against a 20-second ceiling, that is a 2.5x advantage over GPT-Image-1.5 (50 / 20) and more than 4x over Grok Imagine Image Pro (85 / 20 ≈ 4.25). Combined with lower API overhead, the effective end-to-end workflow speed is up to 4x faster.

🆚 Competitive Comparison

vs. GPT-Image-1.5: Nano Banana 2 wins on text rendering, multilingual support, character consistency, and speed (~15s vs ~50s).

vs. Midjourney v7: Midjourney wins for cinematic artistic output. Nano Banana 2 wins on photorealism, editing accuracy (95%+), and multi-turn conversational workflows.

vs. Flux 2: Both deliver strong photorealism. Nano Banana 2 adds logic-first reasoning and corrective passes via its Gemini backbone.

vs. Seedream 5 / Qwen-Image-2.0: Chinese rivals offer ~$0.035/image. Google counters with distribution depth, Search grounding, and ecosystem integration.

🌍 Availability

Nano Banana 2 is live as the default across the Gemini app (all tiers), Google Search in 141 countries, Google Flow (free), and Google Ads. It is also available on developer platforms including AI Studio, the Gemini API, Vertex AI, and Firebase AI Logic. Every image carries a SynthID watermark and C2PA credentials.

🏆 Expert Verdict

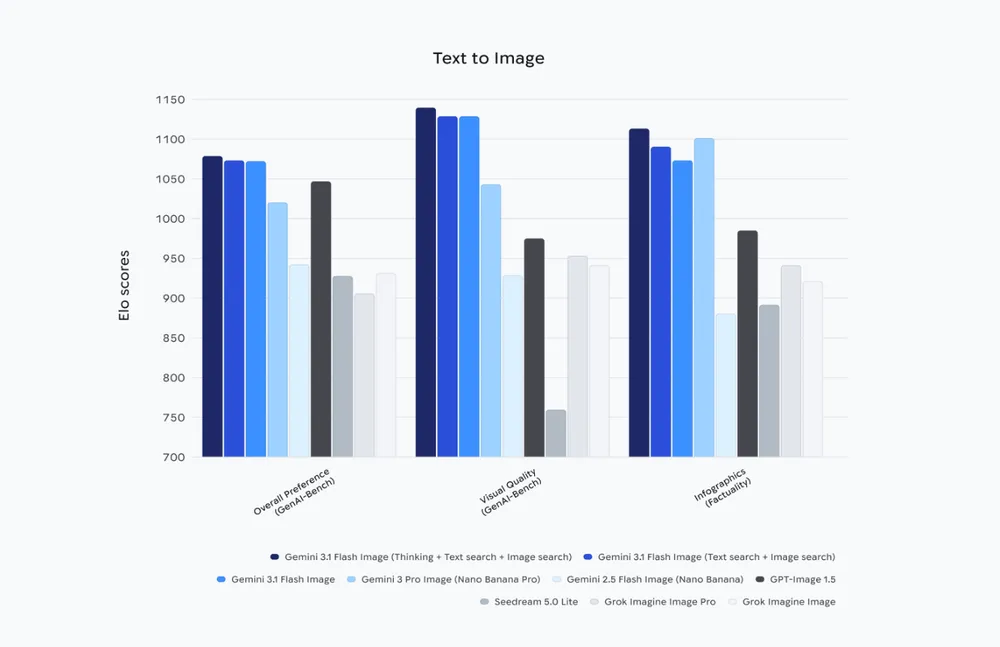

The Decoder calls it “a solid alternative at a fraction of the cost.” On LMArena, it leads all models for both image editing (~1065 Elo) and text-to-image (~1080 Elo) with thinking enabled. The real revolution isn’t the model; it’s the price and speed that put Pro-quality AI imagery within reach of everyone.

World Knowledge Built Into Every Pixel

Most image models generate from imagination. Nano Banana 2 generates from knowledge.

It taps directly into Gemini's broad world knowledge and live web image search to ground its outputs in real-world references. Ask it to render the Hagia Sophia at golden hour, a specific product on a real shelf, or a street corner in Tokyo during rain — and it doesn't guess. It looks it up, then renders with that reference baked in.

Google built a showcase demo called "Window Seat" to prove the point. Give it any location on earth and it produces a photorealistic window view from that exact spot — factoring in live weather data to nail the lighting, atmosphere, and mood. The output isn't just visually plausible. It's visually accurate.

No other image generation model on the market offers this capability today.

✍️ Text Rendering That Finally Works

Text in AI-generated images has been the industry's embarrassing open secret for years — garbled letters, inconsistent fonts, characters that almost look right. Nano Banana 2 fixes this.

Text now renders with the same precision as the surrounding artwork. Sharp, correct, consistent — whether it's a headline, a label, a UI element, or a sign in the background of a scene.

More importantly, it supports in-image localization. You can generate or translate text within the image across multiple languages simultaneously. Not just swapping a string — actually re-rendering the visual so the text fits naturally in the layout, the typography matches the design, and the surrounding visuals are adapted to the target market too.

Google's demo app "Global Ad Localizer" makes this tangible: take one advertisement, feed it to Nano Banana 2, and get back versions localized for every international market — text translated, visuals adapted, all in one pass.

This is a genuine unlock for any team building dynamic UI generators, international marketing tools, or multilingual creative platforms.

🎛️ Greater Creative Control — The Features That Actually Matter

Nano Banana 2 ships with several precision controls that previous Flash-tier models simply didn't have:

New aspect ratios — In addition to all existing ratios, you now get native support for ultra-wide and ultra-tall formats: 4:1, 1:4, 8:1, and 1:8. If you're building for unconventional canvases — billboard layouts, vertical story formats, panoramic displays — it handles them natively without post-crop hacks.

New 512px resolution tier — Joining the existing 1K, 2K, and 4K options, this new lower tier is built for speed. It's the right choice for rapid iteration pipelines, high-volume batch workflows, or any use case where you're generating hundreds of variants and need the lowest possible latency per image.

Stronger instruction following — The model adheres significantly more strictly to complex, multi-layered prompts. If your application sends detailed compositional instructions — specific positions, lighting conditions, color constraints, multiple subjects — Nano Banana 2 follows them. What your prompt requests is what gets generated.

Configurable thinking levels — This is the most interesting control for developers. You can now set the model's reasoning depth before it renders. At Minimal (the default), it generates fast. At High or Dynamic, it plans the image, generates a draft, reviews it internally for prompt adherence, corrects, and then outputs. The quality difference on complex prompts is significant — and you control the tradeoff per request based on your use case.
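One way to use the per-request tradeoff described above is to choose the thinking level in application code before the request is sent. The Python sketch below is illustrative only. The level names follow this article, but the `ImageRequest` shape, the `pick_thinking_level` heuristic, and its threshold are hypothetical and do not mirror any real SDK or REST schema.

```python
from dataclasses import dataclass

# Level names follow the article. The request shape is hypothetical.
THINKING_LEVELS = ("minimal", "high", "dynamic")


@dataclass
class ImageRequest:
    prompt: str
    thinking_level: str = "minimal"  # the article describes Minimal as the default

    def __post_init__(self):
        if self.thinking_level not in THINKING_LEVELS:
            raise ValueError(f"unknown thinking level: {self.thinking_level!r}")


def pick_thinking_level(prompt: str, latency_sensitive: bool) -> str:
    """Crude heuristic: spend reasoning only on multi-constraint prompts."""
    if latency_sensitive:
        return "minimal"
    # Rough proxy for the number of separate instructions in the prompt.
    constraints = prompt.count(",") + prompt.count(" and ") + 1
    return "high" if constraints >= 4 else "minimal"


simple = "a red bicycle"
detailed = ("a cat on the left, a dog on the right, "
            "soft window light, and a teal wall")

for text in (simple, detailed):
    request = ImageRequest(text, pick_thinking_level(text, latency_sensitive=False))
    print(f"{request.thinking_level:>8}: {request.prompt}")
```

The short prompt stays on the fast path, while the multi-constraint prompt is routed to the slower plan, draft, review, and correct path. A production system would likely replace the comma-counting heuristic with something tied to its own prompt templates.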

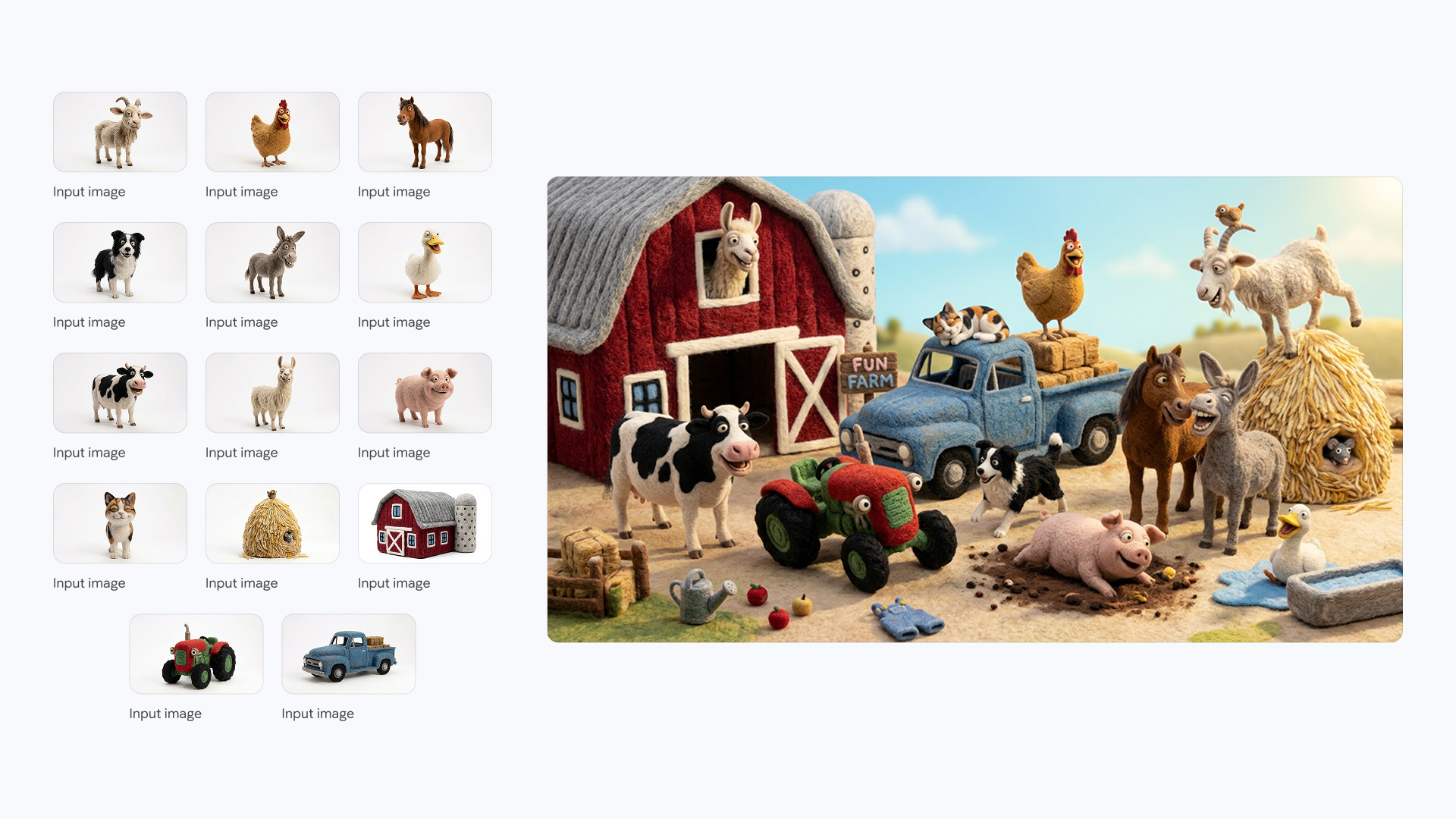

Google's "Pet Passport" demo shows all of this working together: take one photo of your pet, and the app sends them on a world tour — generating them at the Eiffel Tower, on a Tokyo street, against the Santorini cliffs — while maintaining exact visual consistency of the animal across every scene. Custom creative controls let you tune the style and output for each destination.

🏭 What Production Partners Are Already Building

The model didn't just go live for demos. Partners already running it in production are reporting real numbers:

HubX integrated Nano Banana 2 into their face-editing workflows and saw a 74–76% reduction in latency — making their pipelines roughly 4x faster without sacrificing quality. For a consumer-facing app where every second of wait time matters, that's the difference between a product that feels instant and one that feels slow.

Other teams across e-commerce, advertising, and creative tooling are reporting similar results — the combination of faster generation, lower API cost (50% cheaper than Nano Banana Pro at 1K resolution), and higher instruction fidelity is unlocking use cases that were previously too expensive or too slow to ship.

Sources: Google Blog, TechCrunch, The Decoder, VentureBeat, 9to5Google — February 26, 2026